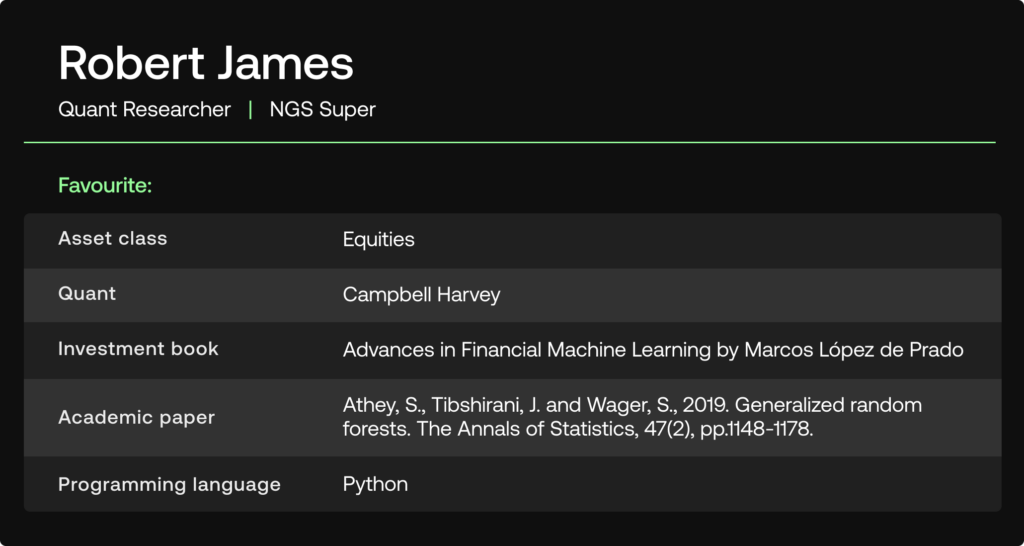

In this edition of SigView – a Quant’s Perspective, we gain insights from Robert James, quant researcher at the $13bn Australian superannuation fund NGS Super. NGS Super is the award-winning super fund for Australia’s education and community sector workers. In our discussion, Robert shares his views on what makes his quant models unique, the key challenges he faces in his day-to-day research work, and where he currently sees the best alpha opportunities. In addition to his work at NGS Super, Robert is a postdoctoral research fellow at the University of Sydney.

We hope you enjoy the conversation!

Tell us about your role at NGS Super.

I am a quantitative researcher within the portfolio construction and quantitative research team at NGS Super. I am responsible for building some of our quantitative models and analysing the data that we use to form our views about future expected returns and market movements.

What kind of investment strategies and markets do you focus on?

I am mostly focused on equities, both international and Australian. The team that I work in is responsible for the strategic and dynamic asset allocation decisions at the fund. We have also recently started thinking about new approaches to implement short-term tactical overlays to our portfolios.

What is unique about your quant models and investment strategies?

I think that we have quite a strong academic focus embedded into our quant models. We are always trying to ensure that our approach is well grounded in both economic and statistical theory. Since other members of the team and I have PhDs in various fields, we enjoy reading, implementing and adapting techniques published in academic papers to the modelling tasks at hand. At the fund we also have a big focus on ESG and responsible investing, as demonstrated by our goal to be carbon neutral by 2030.

What are the key challenges you face in your day-to-day operations as a quant researcher?

Data availability, quality and seamless delivery are always key challenges in building a good quantitative model. This is certainly an area where platforms such as SigTech can help tremendously. Moreover, because we do not have an unlimited budget, we constantly need to make sure that we are using data and variables that provide the best value and are fit for the models that we want to design. For example, it is often necessary to screen variables that we might get from different data providers to ensure that they are likely to have a good relationship with our target variable of interest and that they do not provide information that is already embedded in another dataset that we are using. Finally, we always need to be careful that any model we design is not overfitting noisy financial data and that any performance analytics and backtesting associated with a model are done using best-practice approaches.
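As an illustration only, the two screening checks Robert mentions (relevance to the target and redundancy with data already in use) can be sketched in a few lines of Python. This is not NGS Super's method: the function name `screen_features`, the thresholds, the use of plain Pearson correlation, and the synthetic data are all assumptions made for the example.

```python
import numpy as np

def screen_features(X, y, names, min_relevance=0.1, max_redundancy=0.9):
    """Greedy screen (hypothetical helper): keep features that correlate with
    the target and are not near-duplicates of a feature already kept."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    n_features = X.shape[1]

    # Relevance: absolute Pearson correlation of each feature with the target.
    relevance = np.array(
        [abs(np.corrcoef(X[:, j], y)[0, 1]) for j in range(n_features)]
    )
    # Redundancy: absolute pairwise correlation between features.
    pairwise = np.atleast_2d(np.abs(np.corrcoef(X, rowvar=False)))

    selected = []
    for j in np.argsort(-relevance):      # most relevant first
        if relevance[j] < min_relevance:  # everything after this is weaker
            break
        if all(pairwise[j, k] <= max_redundancy for k in selected):
            selected.append(j)
    return [names[j] for j in selected]

# Synthetic data (assumed): two near-identical signals, one independent
# driver of the target, and one pure-noise column.
rng = np.random.default_rng(0)
n = 2000
signal = rng.normal(size=n)
duplicate = signal + 0.01 * rng.normal(size=n)
other = rng.normal(size=n)
noise = rng.normal(size=n)
y = signal + 0.5 * other + 0.5 * rng.normal(size=n)

X = np.column_stack([signal, duplicate, other, noise])
kept = screen_features(X, y, ["signal", "duplicate", "other", "noise"])
print(kept)
```

In this toy setup the screen keeps only one of the two near-identical columns, which mirrors the budget argument above: a second dataset that repeats information already held adds cost without adding much predictive content. Pearson correlation only captures linear relationships, so a production screen would likely need something more robust.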

What are the latest technological advancements that you are making use of?

Since internal quantitative research and modelling is reasonably new to the fund, we have aimed to start out by using well-established technology and methods that are fit for purpose. This then provides us with a solid foundation to continue building and developing more complex and state-of-the-art approaches. I think it is fair to say that SigTech is one of the more modern technologies that we are using at the fund. Also, in terms of statistical technologies, I have investigated implementing a number of recent model-free variable screening methods for high-dimensional data.
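The interview does not say which model-free screening methods Robert has in mind. One well-known example from the literature is ranking features by their distance correlation with the target, which can pick up nonlinear dependence without assuming a model. The sketch below is a minimal NumPy version for one-dimensional variables; the variable names and synthetic data are assumptions for illustration.

```python
import numpy as np

def distance_correlation(x, y):
    """Sample distance correlation between two 1-D arrays (V-statistic form)."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    # Pairwise absolute-distance matrices.
    a = np.abs(x[:, None] - x[None, :])
    b = np.abs(y[:, None] - y[None, :])
    # Double-centre each matrix: subtract row and column means, add grand mean.
    A = a - a.mean(axis=0, keepdims=True) - a.mean(axis=1, keepdims=True) + a.mean()
    B = b - b.mean(axis=0, keepdims=True) - b.mean(axis=1, keepdims=True) + b.mean()
    dcov2 = max((A * B).mean(), 0.0)  # guard against tiny negative rounding
    denom = np.sqrt((A * A).mean() * (B * B).mean())
    return float(np.sqrt(dcov2 / denom)) if denom > 0 else 0.0

# Synthetic data (assumed): the target depends on x_nonlinear only through
# its square, a relationship that linear correlation tends to miss.
rng = np.random.default_rng(1)
n = 400
x_nonlinear = rng.normal(size=n)
x_noise = rng.normal(size=n)
target = x_nonlinear ** 2 + 0.1 * rng.normal(size=n)

scores = {
    "x_nonlinear": distance_correlation(x_nonlinear, target),
    "x_noise": distance_correlation(x_noise, target),
}
# Screening step: rank candidate features by their score, highest first.
ranking = sorted(scores, key=scores.get, reverse=True)
print(ranking, scores)
```

This version builds full n-by-n distance matrices, so memory grows quadratically with the number of observations. That is fine for a sketch but would need a more careful implementation for long histories or many candidate features.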

In your academic work, what are you currently focusing on?

I have just published a few of the papers that I wrote during my time as a PhD student, for example one that explores a new approach to detecting illegal trading and one that proposes a regularized extension to a well-established risk forecasting model. I am currently starting to explore better strategic portfolio rebalancing approaches, the behaviour of different machine learning models and paradigms for stock return direction forecasting, and applications of optimal transport theory.

Are there any specific datasets that you currently find particularly interesting to research?

I am finding that novel transformations and aggregations of some variables obtained from public economic data sources can be particularly useful. We have also been exploring the utility of some of the more complex signals that we receive from external third-party providers. These signals are typically composites, which could help to reduce the size of the feature space that a model might need to make accurate predictions.

Where do you see the most promising opportunities to generate alpha in the current market environment?

Approaches that can exploit any predictable behaviour during elevated volatility regimes could be interesting right now. An understanding of the expected market behaviour during peak interest rate periods could also be helpful in formulating alpha-generating strategies. Certain fixed income investments may also be starting to look more attractive, particularly in terms of possible diversification benefits.

What is next on your research agenda as well as on the technology and data side?

In general, the team is exploring ways to better augment our decision-making processes with data-driven quant models. I have started exploring applications of optimal transport theory for certain portfolio management tasks. I’m also interested in exploring new financial risk forecasting approaches that use machine learning techniques. Other ideas include applications of stochastic frontier models for benchmarking. On the technology side, I think implementing best-practice MLOps techniques will also become increasingly important for us going forward.

Disclaimer

SigTech is not responsible for, and expressly disclaims all liability for, damages of any kind arising out of use, reference to, or reliance on such information. While the speaker makes every effort to present accurate and reliable information, SigTech does not endorse, approve, or certify such information, nor does it guarantee the accuracy, completeness, efficacy, timeliness, or correct sequencing of such information. All presentations represent the opinions of the speaker and do not represent the position or the opinion of SigTech or its affiliates.