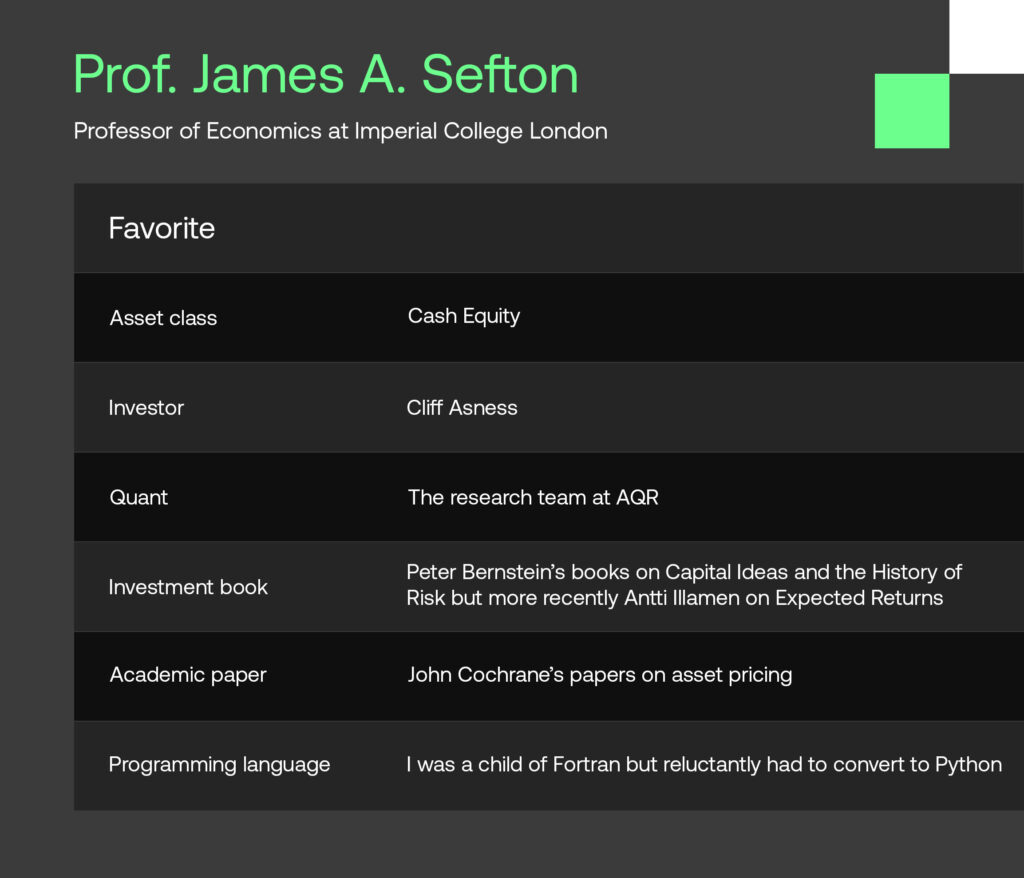

We are excited to feature Professor James Sefton and his valuable insights in this edition of SigView. James has had a distinguished career as a quant practitioner at UBS and Winton Capital Management, where he held the position of Principal Scientist. Currently, he serves as a Professor of Economics at Imperial College Business School. In the interview, he shares insights into his core principles for building quant models and the most common mistakes observed among quants. Additionally, he provides his perspective on technological advancements such as AI and machine learning, as well as alternative data.

We hope you will enjoy the conversation!

Tell us about your background as a quant.

I came to quant investing in a roundabout way. As a student, I was a keen mathematician, with an interest in economics and politics. These interests pulled me via a PhD in systems theory to a stint as a macro-modeller at NIESR and then, in 1997, to the UBS quant equity research desk to manage their input/output or supply chain model. I therefore landed by sheer chance in one of the strongest (if not the strongest) equity research hubs in the City during the heyday of quant investing. Sometimes the dice roll for you!

Quant equity research was different back then. There was a deep sense of community, a sense of excitement that we held the keys to a rigorous, almost fail-safe approach to systematic investing. Research was shared relatively openly because, I guess, in the background there was a feeling that there was more than enough to go around.

This was a productive time, and I published papers on risk modeling, quant strategies, forecasting earnings and portfolio construction, and marketed these to UBS’s clients: BGI, Fidelity, GIC, as well as European pension and hedge funds. In 2006, I decided, rather rashly as it turned out, that I wanted to try to be the portfolio manager and not the researcher. I took on a role as Principal Scientist at Winton Capital Management to develop a European long-short equity fund. However, the financial crisis of 2007-2008 was a turning point: it exposed the vulnerabilities of many quant strategies. I found out the hard way that the markets can be ruthless and that being a portfolio manager is harder than being a researcher. This prompted a period of introspection and reassessment.

Following a brief return to UBS, I was drawn back to academia. Now, as a Professor of Economics at Imperial College Business School, I’ve found an opportunity to delve back into research. It’s a gratifying return to the shared sense of purpose and collaboration I initially admired in the quant community.

What are your core principles when building quant models?

When it comes to building quant models, I adhere to a few core principles. The first guide is the KISS principle – ‘Keep It Simple, Stupid.’ A quant process can so easily stray into overcomplexity. There is an art to building a simple, robust, and transparent model that can be extended if necessary without compromising the core structure.

The second principle is succinctly captured by Professor Bill Sharpe: “The optimal investment process proceeds from sensible assumptions, and is broadly consistent with the data. You want to avoid approaches that have no basis in theory and blindly say, ‘It seems to have worked in the past, so it will work in the future’.” So premise your process on sound foundations; any equity quant strategy needs to be grounded in the core ‘risk premia’ factor signals. While the emphasis on these core strategies has shifted over time, they remain the fundamental building blocks of any model.

Thirdly, though related, always try to run a new strategy as an independent portfolio first. This offers clear advantages: it makes it easier to monitor trading costs and performance, and it allows more flexibility when it comes to portfolio construction (such as concentration, turnover, volatility targeting and market neutrality).

What are the most common mistakes you’ve observed quant researchers making?

I fear I will repeat myself here. Quantitative research has a tendency to fall prey to data-mining and overcomplexity. Simpler models, grounded in solid fundamentals, almost always outperform their intricate counterparts. Complex models aren’t just challenging to interpret; they’re also typically more susceptible to errors and instability.

Quant researchers can over-rely on portfolio optimisers. While understanding risk exposure is vital, simpler portfolio construction rules can be more robust, and reduce unnecessary trading.

Lastly, have faith in your research, particularly during performance slumps. It is crucial to resist the urge to adjust models after brief underperformance. In quant research, consistency and patience are paramount.

In your academic work in quant finance, what is currently at the top of your research agenda?

As an academic in quantitative finance, my focus is often on the novelty of research, as opposed to its immediate practical application. I’m currently working on two papers. One explores dynamic portfolio optimization with costs, aiming to understand the impact of transaction costs on portfolio optimization over time. The other focuses on designing optimal target-dated life-cycle funds, a crucial component in retirement planning.

Additionally, I have a keen interest in the potential applications of machine learning in the design of quant strategies. The combination of machine learning and quantitative finance offers a myriad of opportunities for innovative research. It’s an area I’m eager to delve further into, but as yet I have not found ‘a research opening’.

Which essential research topics and applications in quantitative finance do you have your students currently focus on?

To reiterate, I firmly believe in the necessity of grounding research and the investment process in theory. I guide my students to start their inquiries with a clearly defined hypothesis, testing it in its simplest form before adding complexity. If initial tests fail, it’s often more productive to shift focus rather than persist with an idea that lacks robust underpinnings.

Simultaneously, I promote a culture of learning from my students. Their fresh perspectives and enthusiasm breathe life into this field, countering any tendency to ‘weariness’. Not every one of their ideas comes to fruition (in fact, very few do), but those that do underscore the power of innovative thinking in quantitative finance.

In essence, I foster an academic environment blending solid theoretical foundations with an openness to new ideas. This balanced approach not only provides a firm base for research but also nurtures innovation, preparing students to adapt and thrive in this dynamic field of finance.

What is your perspective on technological advancements such as AI and machine learning, as well as alternative data? Are we currently experiencing a paradigm shift?

In my view, AI, machine learning, and alternative data are prompting an evolution, but not a paradigm shift, in the world of finance. ‘Old school’ models remain relevant, but ignore these innovations at your peril.

Currently, machine learning has shown promise in fine-tuning ‘meta-parameters’ in core processes, such as adjusting look-back periods and modifying leverage. But I am not aware of the ‘discovery’ of new signals that have a decay time longer than a few days.

Alternative data is largely a ‘big-fund’ game due to substantial fixed costs and its applicability to only a small investment universe (or a small number of securities). Creating an investment portfolio requires combining the output from these many datasets with a core quant process. I am not aware of any fund that has managed to do this effectively yet.

In a nutshell, these technologies bring forth exciting possibilities, but I believe we are still in the early stages of truly understanding their full potential and how they can be integrated into existing quant models. It may not be a paradigm shift just yet, but it does feel as though we are standing on the brink of significant changes.

How has the acceptance of quant investing among institutional investors changed over the past two decades? And how do you see this trend developing?

Reflecting on my experience, I can say that quant investing has certainly evolved significantly over the past two decades. What was once a straightforward approach promising consistent outperformance has now fractured into a much more intricate landscape.

From my perspective, old-style quant investing has turned into a ‘poor man’s’ active management. Its relative affordability has broadened its appeal, leading to a large degree of commoditization.

On the flip side, the influx of groundbreaking developments, notably in the fields of alternative data, machine learning, and natural language processing, is labor-, infrastructure- and capital-intensive to exploit. These state-of-the-art tools, though still in their infancy of application, are the ‘high-end’ of quant investing.

As I look towards the future, the trajectory of these trends is uncertain. However, considering the rapid pace of technological advancements and the growing access to sophisticated data, it’s challenging to discount their potential influence. As we move ahead, I believe the landscape of quant investing will continue to evolve and unveil new opportunities for those who can adapt.

What resources do you recommend for staying informed about the latest advancements in quantitative finance?

Well, first off, you’re always welcome to attend my lectures! I promise they’re not as dry as they sound. That’s a bit of a jest, of course, but there’s truth in the idea that academic settings can often provide valuable insights into the latest in the field.

Another invaluable resource is networking. Engaging in conversations at conferences and meetings often leads to learning about the most recent developments and innovative ideas in quantitative finance.

Lastly, I’d recommend the Social Science Research Network, or SSRN. It’s a vast repository of the latest research across a multitude of disciplines, including quantitative finance. However, given the sheer volume of content, sifting through to find the most valuable papers can be quite time-consuming. I haven’t yet found a foolproof method for separating the wheat from the chaff, other than sharing with others.

In this rapidly changing field, I firmly believe in the importance of staying updated and interconnected. Leveraging these resources, along with nurturing a healthy dose of curiosity, has been invaluable in helping me keep pace with the latest advancements in quantitative finance.

Disclaimer

SigTech is not responsible for, and expressly disclaims all liability for, damages of any kind arising out of use, reference to, or reliance on such information. While the speaker makes every effort to present accurate and reliable information, SigTech does not endorse, approve, or certify such information, nor does it guarantee the accuracy, completeness, efficacy, timeliness, or correct sequencing of such information. All presentations represent the opinions of the speaker and do not represent the position or the opinion of SigTech or its affiliates.